Journal Description

Entropy

Entropy is an international and interdisciplinary peer-reviewed open access journal of entropy and information studies, published monthly online by MDPI. The International Society for the Study of Information (IS4SI) and the Spanish Society of Biomedical Engineering (SEIB) are affiliated with Entropy, and their members receive a discount on the article processing charge.

- Open Access: free for readers, with article processing charges (APC) paid by authors or their institutions.

- High Visibility: indexed within Scopus, SCIE (Web of Science), Inspec, PubMed, PMC, Astrophysics Data System, and other databases.

- Journal Rank: JCR - Q2 (Physics, Multidisciplinary) / CiteScore - Q1 (Mathematical Physics)

- Rapid Publication: manuscripts are peer-reviewed and a first decision is provided to authors approximately 20.8 days after submission; acceptance to publication is undertaken in 2.9 days (median values for papers published in this journal in the second half of 2023).

- Recognition of Reviewers: reviewers who provide timely, thorough peer-review reports receive vouchers entitling them to a discount on the APC of their next publication in any MDPI journal, in appreciation of the work done.

- Testimonials: See what our editors and authors say about Entropy.

- Companion journals for Entropy include: Foundations, Thermo and MAKE.

Impact Factor: 2.7 (2022); 5-Year Impact Factor: 2.6 (2022)

Latest Articles

Monte Carlo Based Techniques for Quantum Magnets with Long-Range Interactions

Entropy 2024, 26(5), 401; https://doi.org/10.3390/e26050401 - 01 May 2024

Abstract

Long-range interactions are relevant for a large variety of quantum systems in quantum optics and condensed matter physics. In particular, the control of quantum–optical platforms promises to gain deep insights into quantum-critical properties induced by the long-range nature of interactions. From a theoretical

[...] Read more.

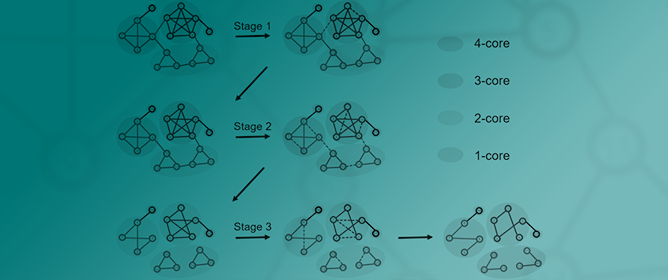

Long-range interactions are relevant for a large variety of quantum systems in quantum optics and condensed matter physics. In particular, the control of quantum-optical platforms promises deep insights into quantum-critical properties induced by the long-range nature of interactions. From a theoretical perspective, long-range interactions are notoriously complicated to treat. Here, we give an overview of recent advances in the investigation of quantum magnets with long-range interactions, focusing on two techniques based on Monte Carlo integration. The first is the method of perturbative continuous unitary transformations, in which classical Monte Carlo integration is applied within the embedding scheme of white graphs. This linked-cluster expansion allows the extraction of high-order series expansions of energies and observables in the thermodynamic limit. The second, stochastic series expansion quantum Monte Carlo integration, enables calculations on large finite systems; finite-size scaling can then be used to determine the physical properties of the infinite system. In recent years, both techniques have been applied successfully to one- and two-dimensional quantum magnets involving long-range Ising, XY, and Heisenberg interactions on various bipartite and non-bipartite lattices. Here, we summarise the resulting quantum-critical properties, including critical exponents, for all these systems in a coherent way. Further, we review how long-range interactions are used to study quantum phase transitions above the upper critical dimension, and the scaling techniques used to extract these quantum-critical properties from the numerical calculations.


(This article belongs to the Special Issue Violations of Hyperscaling in Phase Transitions and Critical Phenomena—in Memory of Prof. Ralph Kenna)

Open AccessArticle

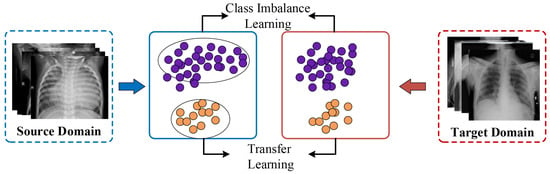

Dynamic Weighting Translation Transfer Learning for Imbalanced Medical Image Classification

by Chenglin Yu and Hailong Pei

Entropy 2024, 26(5), 400; https://doi.org/10.3390/e26050400 - 01 May 2024

Abstract


Medical image diagnosis using deep learning has shown significant promise in clinical medicine. However, it often encounters two major difficulties in real-world applications: (1) domain shift, which invalidates the trained model on new datasets, and (2) class imbalance, which biases models towards majority classes. To address these challenges, this paper proposes a transfer learning solution, named Dynamic Weighting Translation Transfer Learning (DTTL), for imbalanced medical image classification. The approach is grounded in information and entropy theory and comprises three modules: Cross-domain Discriminability Adaptation (CDA), Dynamic Domain Translation (DDT), and Balanced Target Learning (BTL). CDA connects discriminative feature learning between the source and target domains using a synthetic discriminability loss and a domain-invariant feature learning loss. The DDT unit develops a dynamic translation process for imbalanced classes between the two domains, using a confidence-based selection approach to choose the most useful synthesized images and create a pseudo-labeled, balanced target domain. Finally, the BTL unit performs supervised learning on the reassembled target set to obtain the final diagnostic model. This paper maximizes the entropy of class distributions while simultaneously minimizing the cross-entropy between the source and target domains to reduce domain discrepancies. By incorporating entropy concepts into our framework, our method not only significantly enhances medical image classification in practical settings but also introduces a new application of entropy and information theory in deep learning and medical image processing. Extensive experiments demonstrate that DTTL outperforms existing state-of-the-art methods on imbalanced medical image classification tasks.


(This article belongs to the Section Signal and Data Analysis)

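The two entropy terms in the DTTL abstract can be illustrated on plain probability vectors. The sketch below is not the DTTL loss: it assumes class distributions are given directly as lists (in practice they would be averaged network outputs), and the function names and example values are hypothetical.

```python
import math
from typing import Sequence

def entropy(p: Sequence[float]) -> float:
    """Shannon entropy H(p) = -sum_i p_i ln p_i in nats (terms with p_i = 0 contribute 0)."""
    return sum(pi * math.log(1.0 / pi) for pi in p if pi > 0)

def cross_entropy(p: Sequence[float], q: Sequence[float], eps: float = 1e-12) -> float:
    """Cross-entropy H(p, q) = -sum_i p_i ln q_i in nats; eps guards against ln 0."""
    return sum(pi * math.log(1.0 / max(qi, eps)) for pi, qi in zip(p, q))

# Hypothetical class distributions, e.g. mean softmax outputs over three classes.
source = [0.70, 0.20, 0.10]
target = [0.50, 0.30, 0.20]
uniform = [1 / 3, 1 / 3, 1 / 3]

print(entropy(uniform))               # ln 3, the maximum for three classes
print(entropy(source))                # lower, because the distribution is imbalanced
print(cross_entropy(source, target))  # >= entropy(source), equal only if target == source
```

Because H(p, q) = H(p) + KL(p‖q) ≥ H(p), minimizing a cross-entropy term pulls q towards p, while maximizing H pushes a class distribution towards uniform, which is the balancing effect the abstract describes.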

Open Access Review

Hamiltonian Computational Chemistry: Geometrical Structures in Chemical Dynamics and Kinetics

by Stavros C. Farantos

Entropy 2024, 26(5), 399; https://doi.org/10.3390/e26050399 (registering DOI) - 30 Apr 2024

Abstract

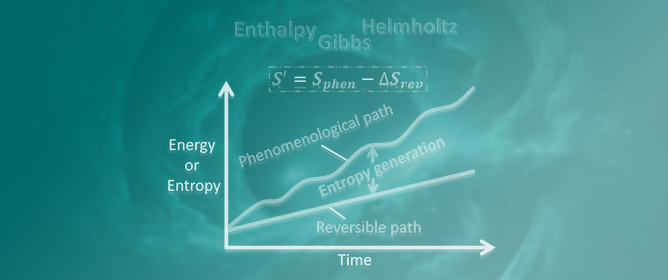

The common geometrical (symplectic) structures of classical mechanics, quantum mechanics, and classical thermodynamics are unveiled with three pictures. These cardinal theories, mainly at the non-relativistic approximation, are the cornerstones for studying chemical dynamics and chemical kinetics. Working in extended phase spaces, we show

[...] Read more.

The common geometrical (symplectic) structures of classical mechanics, quantum mechanics, and classical thermodynamics are unveiled with three pictures. These cardinal theories, mainly in the non-relativistic approximation, are the cornerstones for studying chemical dynamics and chemical kinetics. Working in extended phase spaces, we show that the physical states of integrable dynamical systems are depicted by Lagrangian submanifolds embedded in phase space. Observable quantities are calculated by properly transforming the extended phase space onto a reduced space, and trajectories are integrated by solving Hamilton’s equations of motion. After defining a Riemannian metric, we can also estimate the length between two states. Local constants of motion are investigated by integrating Jacobi fields and solving the variational linear equations. When the symplectic fundamental matrix is diagonalized, the eigenvalues equal to one reveal the number of constants of motion. For conservative systems, geometrical quantum mechanics has proved that solving the Schrödinger equation in extended Hilbert space, which incorporates the quantum phase, is equivalent to solving Hamilton’s equations in the projective Hilbert space. In classical thermodynamics, we take entropy and energy as canonical variables to construct the extended phase space and to represent the Lagrangian submanifold. Hamilton’s and variational equations are written and solved in the same fashion as in classical mechanics. Solvers based on high-order finite differences for numerically solving Hamilton’s, variational, and Schrödinger equations are described. Using the Hénon–Heiles two-dimensional nonlinear model, representative results for time-dependent, quantum, and dissipative macroscopic systems are shown to illustrate concepts and methods.
High-order finite-difference algorithms, despite their accuracy in low-dimensional systems, require substantial computer resources when they are applied to systems with many degrees of freedom, such as polyatomic molecules. We discuss recent research progress in employing Hamiltonian neural networks for solving Hamilton’s equations. It turns out that Hamiltonian geometry, shared with all physical theories, yields the necessary and sufficient conditions for the mutual assistance of humans and machines in deep-learning processes.


(This article belongs to the Special Issue Kinetic Models of Chemical Reactions)
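The review's solvers are high-order finite-difference schemes. As a much simpler stand-in (not the author's method), the sketch below integrates Hamilton's equations for the Hénon–Heiles Hamiltonian H = (p_x² + p_y²)/2 + (x² + y²)/2 + x²y − y³/3 with the second-order symplectic Störmer–Verlet (leapfrog) scheme. The initial condition, step size, and number of steps are assumed values.

```python
from typing import Tuple

State = Tuple[float, float, float, float]  # (x, y, px, py)

def energy(s: State) -> float:
    """Hénon–Heiles Hamiltonian H = T + V."""
    x, y, px, py = s
    return 0.5 * (px**2 + py**2) + 0.5 * (x**2 + y**2) + x**2 * y - y**3 / 3.0

def force(x: float, y: float) -> Tuple[float, float]:
    """-grad V for V = (x^2 + y^2)/2 + x^2 y - y^3/3."""
    return -(x + 2.0 * x * y), -(y + x**2 - y**2)

def leapfrog(s: State, dt: float, n_steps: int) -> State:
    """Kick-drift-kick Störmer–Verlet integration of Hamilton's equations."""
    x, y, px, py = s
    fx, fy = force(x, y)
    for _ in range(n_steps):
        px += 0.5 * dt * fx  # half kick: dp/dt = -dV/dq
        py += 0.5 * dt * fy
        x += dt * px         # full drift: dq/dt = p
        y += dt * py
        fx, fy = force(x, y)
        px += 0.5 * dt * fx  # second half kick with the updated force
        py += 0.5 * dt * fy
    return x, y, px, py

s0: State = (0.0, 0.1, 0.35, 0.0)  # assumed initial condition, energy below the escape value 1/6
s1 = leapfrog(s0, dt=0.01, n_steps=10_000)
print(energy(s0), energy(s1))  # the two energies should agree closely
```

Because the scheme is symplectic, its energy error stays bounded over long integrations instead of drifting, which is one practical reason the geometric structure discussed in the review matters numerically.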

Open Access Article

On an Aggregated Estimate for Human Mobility Regularities through Movement Trends and Population Density

by Fabio Vanni and David Lambert

Entropy 2024, 26(5), 398; https://doi.org/10.3390/e26050398 (registering DOI) - 30 Apr 2024

Abstract


This article introduces an analytical framework that interprets individual measures of entropy-based mobility derived from mobile phone data. We explore and analyze two widely recognized entropy metrics: random entropy and uncorrelated Shannon entropy. These metrics are estimated through collective variables of human mobility, including movement trends and population density. By employing a collisional model, we establish statistical relationships between entropy measures and mobility variables. Furthermore, our research addresses three primary objectives: firstly, validating the model; secondly, exploring correlations between aggregated mobility and entropy measures in comparison to five economic indicators; and finally, demonstrating the utility of entropy measures. Specifically, we provide an effective population density estimate that offers a more realistic understanding of social interactions. This estimation takes into account both movement regularities and intensity, utilizing real-time data analysis conducted during the peak period of the COVID-19 pandemic.


(This article belongs to the Special Issue Modeling and Control of Epidemic Spreading in Complex Societies)
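The two entropy metrics named in the mobility abstract are commonly defined per user as S_rand = log₂ N, where N is the number of distinct locations visited, and S_unc = −Σ_j p_j log₂ p_j, where p_j is the fraction of visits to location j. A minimal sketch, assuming a user's trace is a list of location IDs (the IDs below are hypothetical). The paper's collisional model, which estimates these quantities from aggregate movement trends and population density, is not reproduced here.

```python
import math
from collections import Counter
from typing import Hashable, Sequence

def random_entropy(visits: Sequence[Hashable]) -> float:
    """S_rand = log2(N): every distinct location is treated as equally likely."""
    return math.log2(len(set(visits)))

def uncorrelated_entropy(visits: Sequence[Hashable]) -> float:
    """S_unc = -sum_j p_j log2 p_j, with p_j the visit frequency of location j."""
    n = len(visits)
    return sum((c / n) * math.log2(n / c) for c in Counter(visits).values())

# Hypothetical sequence of locations (e.g. cell-tower IDs) recorded for one user.
trace = ["home", "work", "home", "work", "gym", "home", "home", "work"]
print(random_entropy(trace))        # log2(3) ≈ 1.585
print(uncorrelated_entropy(trace))  # smaller, because visits are uneven
```

S_unc ≤ S_rand always holds, with equality only when all visited locations are visited equally often.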

Open Access Article

QUBO Problem Formulation of Fragment-Based Protein–Ligand Flexible Docking

by Keisuke Yanagisawa, Takuya Fujie, Kazuki Takabatake and Yutaka Akiyama

Entropy 2024, 26(5), 397; https://doi.org/10.3390/e26050397 (registering DOI) - 30 Apr 2024

Abstract


Protein–ligand docking plays a significant role in structure-based drug discovery. This methodology aims to estimate the binding mode and binding free energy between the drug-targeted protein and candidate chemical compounds, using protein tertiary structure information. Previous work has attempted to reformulate this docking as a quadratic unconstrained binary optimization (QUBO) problem and solve it via quantum annealing. However, previous studies did not consider the internal degrees of freedom of the compound, which are essential. In this study, we formulated fragment-based protein–ligand flexible docking as a QUBO problem, considering the internal degrees of freedom of the compound by focusing on fragments (rigid chemical substructures of compounds). We introduced four factors essential for fragment-based docking into the Hamiltonian: (1) the interaction energy between the target protein and each fragment, (2) clashes between fragments, (3) covalent bonds between fragments, and (4) the constraint that each fragment of the compound is selected for a single placement. We also implemented a proof-of-concept system and conducted redocking for the protein–compound complex structure of aldose reductase (a drug target protein) using SQBM+, a simulated quantum annealer. The predicted binding pose reconstructed from the best solution was near-native (

(This article belongs to the Special Issue Ising Model: Recent Developments and Exotic Applications II)
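Two of the four Hamiltonian terms listed in the docking abstract, a per-placement interaction energy and the one-placement-per-fragment constraint, can be written as a QUBO using the standard one-hot penalty A(1 − Σ_i x_i)² = A(1 − Σ_i x_i + 2 Σ_{i<j} x_i x_j) for binary x_i. The sketch below assumes a toy instance with two fragments, hypothetical energies, and a single clash penalty; it omits the covalent-bond term and minimizes by brute force instead of annealing, so it is not the authors' formulation.

```python
import itertools
from typing import Dict, List, Tuple

QUBO = Dict[Tuple[int, int], float]  # upper-triangular coefficients Q[i, j], i <= j

def add(q: QUBO, i: int, j: int, w: float) -> None:
    """Accumulate w into the entry (min(i, j), max(i, j))."""
    key = (min(i, j), max(i, j))
    q[key] = q.get(key, 0.0) + w

def one_hot_penalty(q: QUBO, idx: List[int], a: float) -> float:
    """Add a*(1 - sum x_i)^2 for binary x_i; returns the constant offset a."""
    for i in idx:
        add(q, i, i, -a)  # x_i^2 = x_i, so a*(x_i - 2 x_i) = -a x_i
    for i, j in itertools.combinations(idx, 2):
        add(q, i, j, 2.0 * a)  # cross terms 2a x_i x_j
    return a

def energy(q: QUBO, x: Tuple[int, ...], offset: float) -> float:
    """E(x) = offset + sum_{i<=j} Q[i, j] x_i x_j."""
    return offset + sum(w * x[i] * x[j] for (i, j), w in q.items())

# Variables 0-2: placements of hypothetical fragment A; 3-4: placements of fragment B.
q: QUBO = {}
placement_energy = [-3.0, -1.0, -2.0, -2.5, -0.5]  # hypothetical protein-fragment energies
for i, e in enumerate(placement_energy):
    add(q, i, i, e)
add(q, 0, 3, 10.0)  # hypothetical clash between A@0 and B@3
offset = one_hot_penalty(q, [0, 1, 2], a=20.0)   # fragment A: exactly one placement
offset += one_hot_penalty(q, [3, 4], a=20.0)     # fragment B: exactly one placement

best = min(itertools.product((0, 1), repeat=5), key=lambda x: energy(q, x, offset))
print(best, energy(q, best, offset))  # (0, 0, 1, 1, 0) -4.5
```

The clash makes the individually best pair (A@0, B@3) unattractive, so the minimum moves to (A@2, B@3). The penalty weight A must exceed the energy gain of any constraint violation; A = 20 is an arbitrary value that is large enough for this toy instance.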

Open Access Article

Learning Traveling Solitary Waves Using Separable Gaussian Neural Networks

by Siyuan Xing and Efstathios G. Charalampidis

Entropy 2024, 26(5), 396; https://doi.org/10.3390/e26050396 (registering DOI) - 30 Apr 2024

Abstract


In this paper, we apply a machine learning approach to learning traveling solitary waves across various physical systems described by families of partial differential equations (PDEs). Our approach integrates a novel interpretable neural network (NN) architecture called the Separable Gaussian Neural Network (SGNN) into the framework of Physics-Informed Neural Networks (PINNs). Unlike traditional PINNs, which treat spatial and temporal data as independent inputs, the present method leverages wave characteristics to transform data into the so-called co-traveling wave frame. This adaptation effectively addresses the issue of propagation failure in PINNs when applied to large computational domains. Here, the SGNN architecture demonstrates robust approximation capabilities for single-peakon, multi-peakon, and stationary solutions (known as “leftons”) within the (1 + 1)-dimensional b-family of PDEs. In addition, we expand our investigation and explore not only peakon solutions in the

(This article belongs to the Special Issue Recent Advances in the Theory of Nonlinear Lattices)

Open Access Article

A Spectral Investigation of Criticality and Crossover Effects in Two and Three Dimensions: Short Timescales with Small Systems in Minute Random Matrices

by Eliseu Venites Filho, Roberto da Silva and José Roberto Drugowich de Felício

Entropy 2024, 26(5), 395; https://doi.org/10.3390/e26050395 - 30 Apr 2024

Abstract


Random matrix theory, particularly using matrices akin to the Wishart ensemble, has proven successful in elucidating the thermodynamic characteristics of critical behavior in spin systems across varying interaction ranges. This paper explores the applicability of such methods in investigating critical phenomena and the crossover to tricritical points within the Blume–Capel model. Through an analysis of eigenvalue mean, dispersion, and extrema statistics, we demonstrate the efficacy of these spectral techniques in characterizing critical points in both two and three dimensions. Crucially, we propose a significant modification to this spectral approach, which emerges as a versatile tool for studying critical phenomena. Unlike traditional methods that eschew diagonalization, our method excels in handling short timescales and small system sizes, widening the scope of inquiry into critical behavior.


(This article belongs to the Special Issue Random Matrix Theory and Its Innovative Applications)

Open Access Feature Paper Article

A Joint Communication and Computation Design for Probabilistic Semantic Communications

by Zhouxiang Zhao, Zhaohui Yang, Mingzhe Chen, Zhaoyang Zhang and H. Vincent Poor

Entropy 2024, 26(5), 394; https://doi.org/10.3390/e26050394 (registering DOI) - 30 Apr 2024

Abstract


In this paper, the problem of joint transmission and computation resource allocation for a multi-user probabilistic semantic communication (PSC) network is investigated. In the considered model, users employ semantic information extraction techniques to compress their large-sized data before transmitting them to a multi-antenna base station (BS). Our model represents large-sized data through substantial knowledge graphs, utilizing shared probability graphs between the users and the BS for efficient semantic compression. The resource allocation problem is formulated as an optimization problem with the objective of maximizing the sum of the equivalent rate of all users, considering the total power budget and semantic resource limit constraints. The computation load considered in the PSC network is formulated as a non-smooth piecewise function with respect to the semantic compression ratio. To tackle this non-convex non-smooth optimization challenge, a three-stage algorithm is proposed, where the solutions for the received beamforming matrix of the BS, the transmit power of each user, and the semantic compression ratio of each user are obtained stage by stage. The numerical results validate the effectiveness of our proposed scheme.


(This article belongs to the Special Issue Foundations of Goal-Oriented Semantic Communication in Intelligent Networks)

Open Access Article

Underwriter Discourse, IPO Profit Distribution, and Audit Quality: An Entropy Study from the Perspective of an Underwriter–Auditor Network

by Songling Yang, Yafei Tai, Yu Cao, Yunzhu Chen and Qiuyue Zhang

Entropy 2024, 26(5), 393; https://doi.org/10.3390/e26050393 (registering DOI) - 30 Apr 2024

Abstract


Underwriters play a pivotal role in the IPO process. Information entropy, a tool for measuring the uncertainty and complexity of information, has been widely applied to various issues in complex networks. It can quantify the uncertainty and complexity of nodes in a network, providing a unique analytical perspective and methodological support for this study. This paper employs a bipartite network analysis method to construct the relationship network between underwriters and accounting firms, using the centrality of underwriters in the network as a measure of their influence, to explore how underwriters’ influence affects the distribution of interests and audit outcomes. The findings indicate that greater underwriter influence significantly increases the ratio of underwriting fees to audit fees, and that higher influence is often accompanied by higher abnormal underwriting fees. Further research reveals that companies underwritten by more influential underwriters experience a decline in audit quality. Finally, the study shows that well-structured audit committee governance and the rationalization of market sentiment can mitigate the negative impacts of underwriters’ influence. The innovation of this paper is that it enriches research on underwriters by constructing, for the first time, the relationship network between underwriters and accounting firms as a bipartite network viewed through the lens of information entropy. These conclusions provide new directions for thinking about the motives and possibilities behind cooperation between financial institutions, offering insights for market regulation and policy formulation.


(This article belongs to the Special Issue Complexity in Financial Networks)
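The abstract does not specify which centrality measure is used. As an illustration only, the sketch below computes a normalized degree centrality for each underwriter in a bipartite underwriter–auditor network, together with the Shannon entropy of how its IPOs are spread across accounting firms. Both choices and the engagement data are assumptions, not the paper's measures.

```python
import math
from collections import Counter, defaultdict
from typing import DefaultDict, Dict, List, Tuple

def underwriter_metrics(edges: List[Tuple[str, str]]) -> Dict[str, Tuple[float, float]]:
    """Per underwriter: (normalized degree centrality, entropy of auditor mix in bits).

    Degree centrality = distinct accounting-firm partners / all accounting firms in the network.
    """
    auditors = {a for _, a in edges}
    partners: DefaultDict[str, Counter] = defaultdict(Counter)
    for underwriter, auditor in edges:
        partners[underwriter][auditor] += 1  # one count per shared IPO
    metrics = {}
    for underwriter, counts in partners.items():
        n = sum(counts.values())
        h = sum((c / n) * math.log2(n / c) for c in counts.values())
        metrics[underwriter] = (len(counts) / len(auditors), h)
    return metrics

# Hypothetical IPO engagements: (underwriter, accounting firm).
ipos = [("U1", "A1"), ("U1", "A2"), ("U1", "A3"), ("U1", "A1"),
        ("U2", "A1"), ("U2", "A1"), ("U3", "A2")]
for name, (centrality, h) in sorted(underwriter_metrics(ipos).items()):
    print(name, round(centrality, 3), round(h, 3))
```

Here U1 reaches every accounting firm and spreads its work unevenly across them, so it gets both the highest centrality and a non-zero entropy; U2 and U3 each work with a single firm.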

Open Access Article

Lévy Flight Model of Gaze Trajectories to Assist in ADHD Diagnoses

by Christos Papanikolaou, Akriti Sharma, Pedro G. Lind and Pedro Lencastre

Entropy 2024, 26(5), 392; https://doi.org/10.3390/e26050392 (registering DOI) - 30 Apr 2024

Abstract

The precise mathematical description of gaze patterns remains a topic of ongoing debate, impacting the practical analysis of eye-tracking data. In this context, we present evidence supporting the appropriateness of a Lévy flight description for eye-gaze trajectories, emphasizing its beneficial scale-invariant properties. Our

[...] Read more.

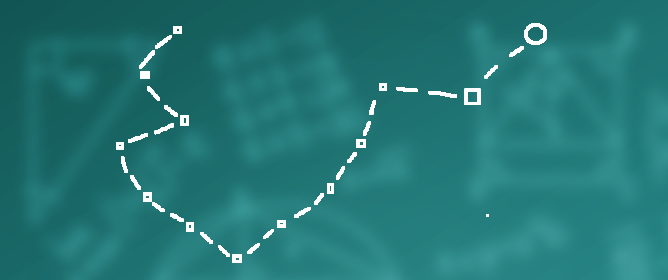

The precise mathematical description of gaze patterns remains a topic of ongoing debate, which affects the practical analysis of eye-tracking data. In this context, we present evidence supporting the appropriateness of a Lévy flight description for eye-gaze trajectories, emphasizing its beneficial scale-invariant properties. Our study focuses on using these properties to aid in diagnosing Attention-Deficit and Hyperactivity Disorder (ADHD) in children, in conjunction with standard cognitive tests. Using this method, we found that the distribution of the characteristic exponent of Lévy flights is statistically different in children with ADHD. Furthermore, we observed that, in contrast to non-ADHD children, these children deviate from a strategy that is considered optimal for search processes. We focused on the case where both eye-tracking data and data from a cognitive test are available, and we show that the study of gaze patterns in children with ADHD can help in identifying this condition. Since eye-tracking data can be gathered during cognitive tests without extra time-consuming tasks, we argue that it is in a prime position to assist in the arduous task of diagnosing ADHD.


(This article belongs to the Special Issue Stochastic Thermodynamics of Microscopic Systems)
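A Lévy flight has step lengths with a power-law tail p(l) ∝ l^(−μ), where μ = 1 + α for a characteristic exponent 0 < α < 2. One standard way to estimate μ from measured gaze step lengths above a cutoff l_min is the continuous power-law maximum-likelihood estimator μ̂ = 1 + n / Σ_i ln(l_i / l_min). This is a swapped-in technique, not necessarily the authors' fitting procedure; the synthetic data, seed, and cutoff are assumptions.

```python
import math
import random
from typing import List, Sequence

def powerlaw_mu_mle(steps: Sequence[float], l_min: float) -> float:
    """Continuous power-law MLE: mu_hat = 1 + n / sum(ln(s_i / l_min)) over s_i >= l_min."""
    tail = [s for s in steps if s >= l_min]
    return 1.0 + len(tail) / sum(math.log(s / l_min) for s in tail)

def sample_powerlaw(mu: float, l_min: float, n: int, rng: random.Random) -> List[float]:
    """Inverse-transform sampling from p(l) ∝ l^(-mu), l >= l_min (requires mu > 1)."""
    # 1 - rng.random() lies in (0, 1], so the negative power is always finite.
    return [l_min * (1.0 - rng.random()) ** (-1.0 / (mu - 1.0)) for _ in range(n)]

rng = random.Random(0)
steps = sample_powerlaw(mu=2.0, l_min=1.0, n=20_000, rng=rng)  # synthetic step lengths
mu_hat = powerlaw_mu_mle(steps, l_min=1.0)
alpha_hat = mu_hat - 1.0  # characteristic exponent, meaningful for 0 < alpha < 2
print(round(mu_hat, 3), round(alpha_hat, 3))
```

In practice l_min must itself be chosen (for example by a goodness-of-fit criterion), and real gaze data are not exactly power-law distributed, so the estimate should be read alongside a fit diagnostic.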

Open Access Article

Thermodynamic Entropy-Based Fatigue Life Assessment Method for Nickel-Based Superalloy GH4169 at Elevated Temperature Considering Cyclic Viscoplasticity

by Shuiting Ding, Shuyang Xia, Zhenlei Li, Huimin Zhou, Shaochen Bao, Bolin Li and Guo Li

Entropy 2024, 26(5), 391; https://doi.org/10.3390/e26050391 (registering DOI) - 30 Apr 2024

Abstract


This paper develops a thermodynamic entropy-based life prediction model to estimate the low-cycle fatigue (LCF) life of the nickel-based superalloy GH4169 at elevated temperature (650 °C). The gauge section of the specimen was chosen as the thermodynamic system for modeling entropy generation within the framework of the Chaboche viscoplastic constitutive theory. Furthermore, an explicit numerical integration algorithm was implemented to calculate the cyclic stress–strain responses and thermodynamic entropy generation, establishing the framework for fatigue life assessment. A thermodynamic entropy-based life prediction model is proposed with a damage parameter based on entropy generation that accounts for the influence of the loading ratio. Fatigue lives of GH4169 at 650 °C under various loading conditions were estimated using the proposed model, and the results showed good agreement with the experimental results. Finally, compared with existing classical models, such as the Manson–Coffin, Ostergren, Walker strain, and SWT models, the thermodynamic entropy-based life prediction model provided significantly better life predictions.

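One simplification used in entropy-based fatigue analysis takes the entropy generated per cycle as the plastic work per cycle (the area of the stress–strain hysteresis loop) divided by the absolute temperature. The sketch below computes that quantity with the shoelace formula for a hypothetical, idealized loop. It ignores the Chaboche viscoplastic model and the loading-ratio correction that the paper's damage parameter includes.

```python
from typing import Sequence, Tuple

Point = Tuple[float, float]  # (strain, stress in MPa)

def loop_area(points: Sequence[Point]) -> float:
    """Area enclosed by a closed stress-strain polygon (shoelace formula)."""
    twice_area = 0.0
    for k, (e1, s1) in enumerate(points):
        e2, s2 = points[(k + 1) % len(points)]  # wrap around to close the loop
        twice_area += e1 * s2 - e2 * s1
    return abs(twice_area) / 2.0

def entropy_per_cycle(points: Sequence[Point], temperature_k: float) -> float:
    """Approximate entropy generated per cycle as plastic work / absolute temperature.

    Stress in MPa times dimensionless strain gives MJ/m^3, so the result is MJ/(m^3 K).
    """
    return loop_area(points) / temperature_k

# Hypothetical idealized stabilized loop (a parallelogram), vertices listed in order.
loop = [(-0.002, -600.0), (0.002, -400.0), (0.002, 600.0), (-0.002, 400.0)]
T = 650.0 + 273.15  # 650 °C in kelvin
print(loop_area(loop), entropy_per_cycle(loop, T))
```

In entropy-based fatigue approaches, such per-cycle values are typically accumulated over the cycles up to failure; the elastic part of the response encloses no area, so only dissipated work contributes.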

Open Access Article

A Study of Adjacent Intersection Correlation Based on Temporal Graph Attention Network

by Pengcheng Li, Baotian Dong and Sixian Li

Entropy 2024, 26(5), 390; https://doi.org/10.3390/e26050390 - 30 Apr 2024

Abstract


Traffic state classification and relevance calculation at intersections are both difficult problems in traffic control. In this paper, we propose an intersection relevance model based on a temporal graph attention network that solves both problems simultaneously. First, the intersection features and the interaction times of the intersections are used as inputs, together with the initial labels of the traffic data. These are then fed into the temporal graph attention (TGAT) model to classify the target intersections into four states (free, stable, slow moving, and congested), and the resulting weights of neighbouring intersections are used as the correlation between intersections. Finally, the model is validated through VISSIM simulation experiments. In terms of classification accuracy, the TGAT model outperforms the three traditional classification models and copes well with an uneven distribution of samples across classes. Using the information gain algorithm from information entropy theory, average delay was identified as the factor with the greatest influence on intersection status. The correlation from the TGAT model is positively correlated with traffic flow, which makes it interpretable. Using this correlation to control the division of subareas improves the road network’s operational efficiency more than the traditional correlation model does, demonstrating the effectiveness of the TGAT model’s correlation.


(This article belongs to the Special Issue Information-Theoretic Methods in Data Analytics)
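The information gain used in the TGAT study to rank factors is IG(Y; X) = H(Y) − Σ_v P(X = v) H(Y | X = v), the reduction in label entropy obtained by knowing a discrete feature. A minimal sketch on discretized observations; the feature values and state labels below are hypothetical and are not the paper's data.

```python
import math
from collections import Counter
from typing import Hashable, Sequence

def entropy_bits(labels: Sequence[Hashable]) -> float:
    """Shannon entropy of the empirical label distribution, in bits."""
    n = len(labels)
    return sum((c / n) * math.log2(n / c) for c in Counter(labels).values())

def information_gain(feature: Sequence[Hashable], labels: Sequence[Hashable]) -> float:
    """IG(Y; X) = H(Y) - sum_v P(X = v) * H(Y | X = v) for a discrete feature X."""
    n = len(labels)
    conditional = 0.0
    for value, count in Counter(feature).items():
        subset = [y for x, y in zip(feature, labels) if x == value]
        conditional += (count / n) * entropy_bits(subset)
    return entropy_bits(labels) - conditional

# Hypothetical discretized observations of one intersection over six intervals.
state = ["free", "free", "stable", "slow", "congested", "congested"]
avg_delay = ["low", "low", "mid", "high", "high", "high"]
flow = ["low", "mid", "mid", "mid", "high", "low"]

print(information_gain(avg_delay, state))  # larger: delay separates the states well
print(information_gain(flow, state))
```

Ranking candidate factors by this score and keeping the largest is the usual way information gain identifies the most influential variable.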

Open Access Article

The Inverse of Exact Renormalization Group Flows as Statistical Inference

by David S. Berman and Marc S. Klinger

Entropy 2024, 26(5), 389; https://doi.org/10.3390/e26050389 - 30 Apr 2024

Abstract


We build on the view of the Exact Renormalization Group (ERG) as an instantiation of Optimal Transport described by a functional convection–diffusion equation. We provide a new information-theoretic perspective for understanding the ERG through the intermediary of Bayesian Statistical Inference. This connection is facilitated by the Dynamical Bayesian Inference scheme, which encodes Bayesian inference in the form of a one-parameter family of probability distributions solving an integro-differential equation derived from Bayes’ law. In this note, we demonstrate how the Dynamical Bayesian Inference equation is, itself, equivalent to a diffusion equation, which we dub Bayesian Diffusion. By identifying the features that define Bayesian Diffusion and mapping them onto the features that define the ERG, we obtain a dictionary outlining how renormalization can be understood as the inverse of statistical inference.


(This article belongs to the Special Issue Applications of Fisher Information in Sciences II)

Open Access Article

A Spatiotemporal Probabilistic Graphical Model Based on Adaptive Expectation-Maximization Attention for Individual Trajectory Reconstruction Considering Incomplete Observations

by Xuan Sun, Jianyuan Guo, Yong Qin, Xuanchuan Zheng, Shifeng Xiong, Jie He, Qi Sun and Limin Jia

Entropy 2024, 26(5), 388; https://doi.org/10.3390/e26050388 - 30 Apr 2024

Abstract


Spatiotemporal information on individual trajectories in urban rail transit is important for operational strategy adjustment, personalized recommendation, and emergency command decision-making. However, owing to the lack of journey observations, it is difficult to accurately infer unknown trajectory information from AFC and AVL data alone. To address this problem, this paper proposes a spatiotemporal probabilistic graphical model based on adaptive expectation-maximization attention (STPGM-AEMA) to reconstruct individual trajectories. The approach consists of three steps. First, the potential train alternative set and the egress time alternative set of each individual are obtained through data mining and combinatorial enumeration. Then, global and local potential variables are introduced to construct a spatiotemporal probabilistic graphical model, which provides the inference process for unknown events and the state information of individual trajectories. Further, considering the effect of missing data, an attention-mechanism-enhanced expectation-maximization algorithm is proposed to achieve maximum likelihood estimation of individual trajectories. Finally, typical datasets of origin-destination pairs and actual individual trajectory tracking data are used to validate the effectiveness of the proposed method. The results show that the STPGM-AEMA method is more than 95% accurate in recovering missing information in the observed data, which is at least 15% more accurate than traditional methods (i.e., PTAM-MLE and MPTAM-EM).


(This article belongs to the Section Signal and Data Analysis)

Open Access Article

On the Accurate Estimation of Information-Theoretic Quantities from Multi-Dimensional Sample Data

by Manuel Álvarez Chaves, Hoshin V. Gupta, Uwe Ehret and Anneli Guthke

Entropy 2024, 26(5), 387; https://doi.org/10.3390/e26050387 - 30 Apr 2024

Abstract


Using information-theoretic quantities in practical applications with continuous data is often hindered by the fact that probability density functions need to be estimated in higher dimensions, which can become unreliable or even computationally unfeasible. To make these useful quantities more accessible, alternative approaches such as binned frequencies using histograms and k-nearest neighbors (k-NN) have been proposed. However, a systematic comparison of the applicability of these methods has been lacking. We wish to fill this gap by comparing kernel-density-based estimation (KDE) with these two alternatives in carefully designed synthetic test cases. Specifically, we wish to estimate the information-theoretic quantities: entropy, Kullback–Leibler divergence, and mutual information, from sample data. As a reference, the results are compared to closed-form solutions or numerical integrals. We generate samples from distributions of various shapes in dimensions ranging from one to ten. We evaluate the estimators’ performance as a function of sample size, distribution characteristics, and chosen hyperparameters. We further compare the required computation time and specific implementation challenges. Notably, k-NN estimation tends to outperform other methods, considering algorithmic implementation, computational efficiency, and estimation accuracy, especially with sufficient data. This study provides valuable insights into the strengths and limitations of the different estimation methods for information-theoretic quantities. It also highlights the significance of considering the characteristics of the data, as well as the targeted information-theoretic quantity when selecting an appropriate estimation technique. These findings will assist scientists and practitioners in choosing the most suitable method, considering their specific application and available data. 
We have collected the compared estimation methods in a ready-to-use open-source Python 3 toolbox and, thereby, hope to promote the use of information-theoretic quantities by researchers and practitioners to evaluate the information in data and models in various disciplines.


(This article belongs to the Special Issue Approximate Entropy and Its Application)
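To make the difference between two of the compared estimator families concrete, here is a minimal sketch (not the authors' toolbox, whose API is not described here) of a Kozachenko–Leonenko k-NN entropy estimator next to a plug-in histogram estimator. It assumes Euclidean distances, natural logarithms (nats), and brute-force neighbor search; the function names `knn_entropy` and `histogram_entropy` are hypothetical.

```python
import math
import numpy as np

def knn_entropy(samples, k=3):
    """Kozachenko-Leonenko k-NN estimate of differential entropy (nats).

    H ~ psi(n) - psi(k) + log(V_d) + (d/n) * sum_i log(r_i), where r_i is the
    Euclidean distance from sample i to its k-th nearest neighbour and V_d is
    the volume of the d-dimensional unit ball.
    """
    x = np.asarray(samples, dtype=float)
    if x.ndim == 1:
        x = x[:, None]
    n, d = x.shape
    # Brute-force pairwise distances: O(n^2) memory, fine for a sketch.
    dists = np.sqrt(((x[:, None, :] - x[None, :, :]) ** 2).sum(axis=-1))
    np.fill_diagonal(dists, np.inf)            # a point is not its own neighbour
    r_k = np.sort(dists, axis=1)[:, k - 1]     # distance to the k-th neighbour
    # psi(n) - psi(k) = sum_{j=k}^{n-1} 1/j, so no digamma library is needed.
    digamma_diff = sum(1.0 / j for j in range(k, n))
    log_unit_ball = (d / 2) * math.log(math.pi) - math.lgamma(d / 2 + 1)
    return float(digamma_diff + log_unit_ball + d * np.mean(np.log(r_k)))

def histogram_entropy(samples, bins=30):
    """Plug-in 1-D histogram estimate: -sum p_i * log(p_i / bin_width_i)."""
    counts, edges = np.histogram(samples, bins=bins)
    widths = np.diff(edges)
    p = counts / counts.sum()
    mask = p > 0                               # empty bins contribute nothing
    return float(-np.sum(p[mask] * np.log(p[mask] / widths[mask])))

rng = np.random.default_rng(0)
x = rng.standard_normal(2000)
true_h = 0.5 * math.log(2 * math.pi * math.e)  # entropy of N(0, 1)
print(true_h, knn_entropy(x), histogram_entropy(x))
```

The histogram estimator needs a bin grid whose cell count grows exponentially with dimension, whereas the k-NN estimator only needs neighbor distances, which is one plausible reason for the dimensional behavior the abstract reports.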

Open Access Article

On the Dimensions of Hermitian Subfield Subcodes from Higher-Degree Places

by

Sabira El Khalfaoui and Gábor P. Nagy

Entropy 2024, 26(5), 386; https://doi.org/10.3390/e26050386 - 30 Apr 2024

Abstract


Our research examines Hermitian curves over finite fields, concentrating on places of degree three and their role in constructing Hermitian codes. We begin by studying the structure of the Riemann–Roch space associated with these degree-three places, aiming to determine essential characteristics such as its basis. The investigation then turns to Hermitian codes, where we analyze both functional and differential codes of degree-three places, focusing on their parameters and automorphisms. In addition, we study subfield subcodes and trace codes and determine their structure by giving lower bounds for their dimensions, which is a complex problem in coding theory. Based on numerical experiments, we formulate a conjecture for the dimension of some subfield subcodes of Hermitian codes. This comprehensive exploration seeks to deepen the understanding of Hermitian codes and their associated subfield subcodes related to degree-three places, thereby contributing to the advancement of algebraic coding theory and code-based cryptography.


(This article belongs to the Special Issue Discrete Math in Coding Theory)
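As a small illustration of what "places of degree three" means (this is not taken from the paper), the sketch below brute-forces the smallest case q = 2, where the Hermitian curve y^q + y = x^(q+1) over F_{q^2} = F_4 becomes y^2 + y = x^3. A degree-three place corresponds to a Frobenius orbit of three points that are rational over F_{q^6} = F_64 but not over F_4, so their number is (N_3 - N_1)/3. The choice of z^6 + z + 1 as the modulus for F_64 is an assumption; any irreducible degree-6 polynomial over F_2 would do.

```python
# GF(64) = GF(2)[z] / (z^6 + z + 1); field elements are 6-bit integers.
MODULUS = 0b1000011

def gf_mul(a: int, b: int) -> int:
    """Multiply in GF(64): carry-less product reduced modulo z^6 + z + 1."""
    result = 0
    while b:
        if b & 1:
            result ^= a          # addition in characteristic 2 is XOR
        b >>= 1
        a <<= 1
        if a & 0b1000000:        # degree reached 6, so reduce
            a ^= MODULUS
    return result

def gf_pow(a: int, n: int) -> int:
    result = 1
    for _ in range(n):
        result = gf_mul(result, a)
    return result

def on_hermitian_curve(x: int, y: int) -> bool:
    """Affine Hermitian curve for q = 2: y^2 + y = x^3."""
    return (gf_mul(y, y) ^ y) == gf_pow(x, 3)

F64 = list(range(64))
F4 = [a for a in F64 if gf_pow(a, 4) == a]   # the subfield F_{q^2} = F_4

# "+ 1" accounts for the single rational place at infinity.
N1 = 1 + sum(on_hermitian_curve(x, y) for x in F4 for y in F4)
N3 = 1 + sum(on_hermitian_curve(x, y) for x in F64 for y in F64)

# Points rational over F_64 but not over F_4 split into orbits of size 3.
B3 = (N3 - N1) // 3
print(N1, N3, B3)  # 9 81 24
```

The count N1 = 9 = q^3 + 1 reflects that the Hermitian curve is maximal over F_{q^2}; the same maximality gives q^3(q+1)^2(q-1)/3 degree-three places in general, which is 24 for q = 2.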

Open Access Article

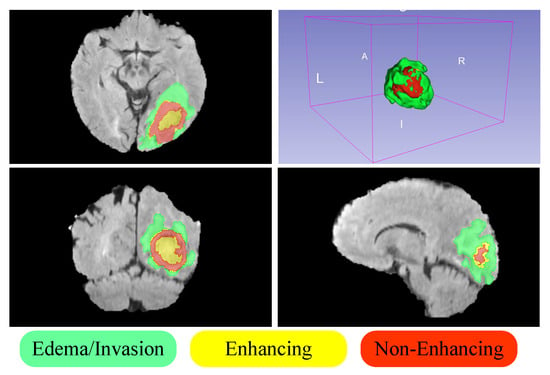

Cascade Residual Multiscale Convolution and Mamba-Structured UNet for Advanced Brain Tumor Image Segmentation

by

Rui Zhou, Ju Wang, Guijiang Xia, Jingyang Xing, Hongming Shen and Xiaoyan Shen

Entropy 2024, 26(5), 385; https://doi.org/10.3390/e26050385 - 30 Apr 2024

Abstract


In brain imaging segmentation, precise tumor delineation is crucial for diagnosis and treatment planning. Traditional approaches include convolutional neural networks (CNNs), which struggle to process sequential data, and transformer models, which struggle to remain computationally efficient on large-scale data. This study introduces MambaBTS, a model inspired by the Mamba architecture that combines the strengths of CNNs and transformers and integrates cascade residual multi-scale convolutional kernels. The model employs a mixed loss function that blends dice loss with cross-entropy to improve segmentation accuracy. This approach reduces computational complexity, enlarges the receptive field, and demonstrates superior performance in segmenting brain tumors in MRI images. Experiments on the MICCAI BraTS 2019 dataset show that MambaBTS achieves dice coefficients of 0.8450 for the whole tumor (WT), 0.8606 for the tumor core (TC), and 0.7796 for the enhancing tumor (ET), and that it outperforms existing models in terms of accuracy, computational efficiency, and parameter efficiency. These results underscore the model’s potential to offer a balanced, efficient, and effective segmentation method that overcomes the constraints of existing models and promises significant improvements in clinical diagnostics and planning.
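The abstract does not give the exact form or weighting of the mixed loss, so the following is only a minimal NumPy sketch of the general idea for a single binary mask: a soft dice loss (one minus the dice coefficient) blended with binary cross-entropy. The weight `alpha = 0.5` and the smoothing constants are assumptions, and a real training setup would use a deep-learning framework and one channel per tumor region (WT, TC, ET).

```python
import numpy as np

def dice_loss(probs, target, eps=1e-6):
    """Soft dice loss: 1 - 2|P*T| / (|P| + |T|), smoothed by eps."""
    inter = np.sum(probs * target)
    denom = np.sum(probs) + np.sum(target)
    return float(1.0 - (2.0 * inter + eps) / (denom + eps))

def bce_loss(probs, target, eps=1e-7):
    """Pixel-averaged binary cross-entropy; probabilities clipped to avoid log(0)."""
    p = np.clip(probs, eps, 1.0 - eps)
    return float(-np.mean(target * np.log(p) + (1 - target) * np.log(1 - p)))

def mixed_loss(probs, target, alpha=0.5):
    """Hypothetical weighting: alpha * dice + (1 - alpha) * cross-entropy."""
    return alpha * dice_loss(probs, target) + (1 - alpha) * bce_loss(probs, target)

target = np.array([[0.0, 1.0], [1.0, 0.0]])
print(mixed_loss(target, target), mixed_loss(1 - target, target))
```

The dice term directly rewards region overlap, which matters when the tumor occupies few voxels, while the cross-entropy term supplies smooth per-voxel gradients; blending them is a common way to get both properties.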


Open Access Article

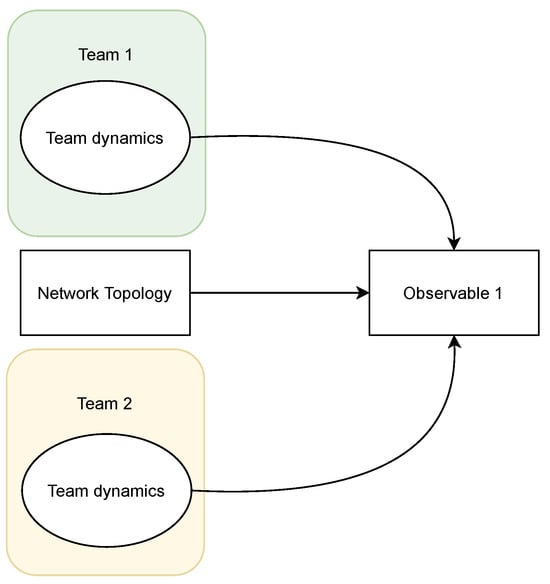

CCTFv2: Modeling Cyber Competitions

by

Basheer Qolomany, Tristan J. Calay, Liaquat Hossain, Aos Mulahuwaish and Jacques Bou Abdo

Entropy 2024, 26(5), 384; https://doi.org/10.3390/e26050384 - 30 Apr 2024

Abstract


Cyber competitions are usually team activities, in which team performance depends not only on the members’ abilities but also on team collaboration. This seems intuitive, especially given that team formation is a well-studied discipline in competitive sports and project management; unfortunately, however, team performance and team formation strategies are rarely studied in the context of cybersecurity and cyber competitions. Since cyber competitions are becoming more prevalent and organized, this gap becomes an opportunity to formalize the study of team performance in the context of cyber competitions. This work follows a cross-validating methodology with two approaches. The first is the computational modeling of cyber competitions using Agent-Based Modeling: team members are modeled in NetLogo as collaborating agents competing over a network in a red team/blue team match. Members’ abilities, team interaction, and network properties are parametrized as inputs, and the match score is reported as the output. The second approach is grounded in the literature on team performance (outside the context of cyber competitions), from which a theoretical framework is built. The results of the first approach are used to build a causal inference model using Structural Equation Modeling. Comparing the causal inference model with the theoretical model showed a high resemblance, which cross-validates both approaches. Two main findings are deduced: first, the body of literature studying teams remains valid and applicable in the context of cyber competitions; second, coaches and researchers can test new team strategies computationally and achieve precise performance predictions. The targeted gap, the methodology used, and the findings are novel to the study of cyber competitions.


(This article belongs to the Special Issue An Entropy Approach to the Structure and Performance of Interdependent Autonomous Human Machine Teams and Systems (A-HMT-S))
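The paper's model is written in NetLogo and its rules are not spelled out in the abstract. The following is therefore only a hypothetical, heavily simplified Python sketch of the same input/output shape: per-member abilities and a collaboration factor go in, and a match score comes out. The attack/restore rules, the `collaboration` multiplier, and the scoring are all invented for illustration.

```python
import random

def simulate_match(red_abilities, blue_abilities, collaboration=0.0,
                   n_nodes=50, steps=100, seed=0):
    """Toy red-team/blue-team match (hypothetical rules, not the paper's model).

    Returns blue's score: the fraction of network nodes left uncompromised.
    """
    rng = random.Random(seed)
    compromised = set()
    for _ in range(steps):
        # Each red agent attacks one random node and succeeds with
        # probability equal to its ability.
        for ability in red_abilities:
            node = rng.randrange(n_nodes)
            if rng.random() < ability:
                compromised.add(node)
        # Each blue agent tries to restore one compromised node; collaboration
        # raises every member's effective ability (capped at 1).
        for ability in blue_abilities:
            effective = min(1.0, ability * (1.0 + collaboration))
            if compromised and rng.random() < effective:
                compromised.discard(rng.choice(sorted(compromised)))
    return 1.0 - len(compromised) / n_nodes
```

In the spirit of the paper's workflow, one would sweep the inputs (for example several `collaboration` values), average the score over many seeds, and then fit a statistical model relating inputs to scores.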


Open Access Article

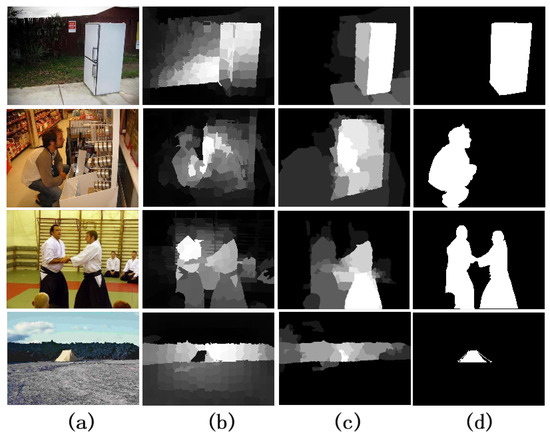

Saliency Detection Based on Multiple-Level Feature Learning

by

Xiaoli Li, Yunpeng Liu and Huaici Zhao

Entropy 2024, 26(5), 383; https://doi.org/10.3390/e26050383 - 30 Apr 2024

Abstract


Finding the most interesting areas of an image is the aim of saliency detection. Conventional methods based on low-level features rely on biological cues such as texture and color. These methods, however, have trouble processing complicated or low-contrast images. In this paper, we introduce a deep neural network-based saliency detection method. First, using semantic segmentation, we construct a pixel-level model that assigns each pixel a saliency value according to its semantic category. Second, we create a region feature model by combining hand-crafted and deep features, which extracts and fuses the local and global information of each superpixel region. Third, we combine the results of the previous two steps with the over-segmented superpixel images and the original images to construct a multi-level feature model. We feed this model into a deep convolutional network, which generates the final saliency map by learning to integrate macro and micro information based on pixels and superpixels. We evaluate our method on five benchmark datasets and compare it with 14 state-of-the-art saliency detection algorithms. According to the experimental results, our method outperforms the other methods in terms of F-measure, precision, recall, and runtime. Additionally, we analyze the limitations of our method and propose potential future developments.
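The abstract does not state the evaluation protocol, so the sketch below shows one common way precision, recall, and F-measure are computed for a saliency map. Both the adaptive threshold of twice the mean saliency and beta^2 = 0.3 are widely used conventions in the saliency literature, assumed here rather than taken from the paper; `saliency_f_measure` is a hypothetical name.

```python
import numpy as np

def saliency_f_measure(saliency, ground_truth, threshold=None, beta2=0.3):
    """Binarize a saliency map and score it against a binary ground-truth mask.

    F = (1 + beta^2) * P * R / (beta^2 * P + R); beta^2 < 1 weights precision
    more heavily than recall.
    """
    s = np.asarray(saliency, dtype=float)
    gt = np.asarray(ground_truth, dtype=bool)
    if threshold is None:
        threshold = min(2.0 * s.mean(), 1.0)   # assumed adaptive threshold
    pred = s >= threshold
    tp = np.logical_and(pred, gt).sum()
    precision = float(tp / pred.sum()) if pred.sum() else 0.0
    recall = float(tp / gt.sum()) if gt.sum() else 0.0
    if precision + recall == 0:
        return precision, recall, 0.0
    f = (1 + beta2) * precision * recall / (beta2 * precision + recall)
    return precision, recall, float(f)

gt = np.array([[1, 1], [1, 0]])
print(saliency_f_measure([[0.9, 0.8], [0.1, 0.0]], gt, threshold=0.5))
```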


Open Access Article

Chip-Based Electronic System for Quantum Key Distribution

by

Siyuan Zhang, Wei Mao, Shaobo Luo and Shihai Sun

Entropy 2024, 26(5), 382; https://doi.org/10.3390/e26050382 - 29 Apr 2024

Abstract


Quantum Key Distribution (QKD) has garnered significant attention due to its unconditional security based on the fundamental principles of quantum mechanics. While QKD has been demonstrated by various groups and commercial QKD products are available, the development of a fully chip-based QKD system, aimed at reducing cost, size, and power consumption, remains a significant technological challenge. Most researchers focus on the optical aspects, leaving the integration of the electronic components largely unexplored. In this paper, we present the design of a fully integrated electrical control chip for QKD applications. The chip, fabricated using 28 nm CMOS technology, comprises five main modules: an ARM processor for digital signal processing, delay cells for timing synchronization, an ADC for sampling analog signals from monitors, an OPAMP for signal amplification, and a DAC for generating the voltages required by phase or intensity modulators. According to simulations, the minimum delay is 11 ps, the open-loop gain of the operational amplifier is 86.2 dB, the sampling rate of the ADC reaches 50 MHz, and the DAC reaches a rate of 100 MHz. To the best of our knowledge, this is the first design and evaluation of a fully integrated driver chip for QKD, and it has the potential to significantly enhance QKD system performance. We therefore believe this work could inspire future investigations toward more efficient and reliable QKD systems.


(This article belongs to the Special Issue Progress in Quantum Key Distribution)
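To illustrate the DAC's role of "generating the required voltage for phase modulators", here is a minimal sketch that maps a target optical phase to a DAC input code. It assumes an ideal linear electro-optic phase modulator (phase = pi * V / V_pi) and an ideal unipolar DAC; the half-wave voltage `v_pi`, reference voltage `v_ref`, and 10-bit resolution are hypothetical values, not parameters of the chip described above.

```python
import math

def phase_to_dac_code(phase, v_pi=1.5, v_ref=3.3, bits=10):
    """Map a target phase (radians) to an integer DAC code.

    Assumes phase = pi * V / v_pi and an ideal DAC spanning [0, v_ref] volts.
    All default parameter values are hypothetical.
    """
    phase = phase % (2 * math.pi)          # phase is only defined modulo 2*pi
    voltage = phase / math.pi * v_pi
    if voltage > v_ref:
        raise ValueError(f"{voltage:.3f} V exceeds the DAC range of {v_ref} V")
    full_scale = (1 << bits) - 1
    return round(voltage / v_ref * full_scale)

# Four phases as used, for example, in phase-encoded BB84 (illustrative only).
codes = [phase_to_dac_code(k * math.pi / 2) for k in range(4)]
print(codes)
```

A real driver would also calibrate for modulator nonlinearity and DAC offset and gain errors; the linear model here only shows why the DAC's range must cover the largest required phase.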

Journal Menu


- Entropy Home

- Aims & Scope

- Editorial Board

- Reviewer Board

- Topical Advisory Panel

- Video Exhibition

- Instructions for Authors

- Special Issues

- Topics

- Sections & Collections

- Article Processing Charge

- Indexing & Archiving

- Editor’s Choice Articles

- Most Cited & Viewed

- Journal Statistics

- Journal History

- Journal Awards

- Society Collaborations

- Conferences

- Editorial Office

Journal Browser

- Forthcoming issue

- Current issue: Vol. 26 (2024)

- Vol. 25 (2023)

- Vol. 24 (2022)

- Vol. 23 (2021)

- Vol. 22 (2020)

- Vol. 21 (2019)

- Vol. 20 (2018)

- Vol. 19 (2017)

- Vol. 18 (2016)

- Vol. 17 (2015)

- Vol. 16 (2014)

- Vol. 15 (2013)

- Vol. 14 (2012)

- Vol. 13 (2011)

- Vol. 12 (2010)

- Vol. 11 (2009)

- Vol. 10 (2008)

- Vol. 9 (2007)

- Vol. 8 (2006)

- Vol. 7 (2005)

- Vol. 6 (2004)

- Vol. 5 (2003)

- Vol. 4 (2002)

- Vol. 3 (2001)

- Vol. 2 (2000)

- Vol. 1 (1999)


Topics

Topic in

Algorithms, Computation, Entropy, Fractal Fract, MCA

Analytical and Numerical Methods for Stochastic Biological Systems

Topic Editors: Mehmet Yavuz, Necati Ozdemir, Mouhcine Tilioua, Yassine Sabbar

Deadline: 10 May 2024

Topic in

Algorithms, Diagnostics, Entropy, Information, J. Imaging

Application of Machine Learning in Molecular Imaging

Topic Editors: Allegra Conti, Nicola Toschi, Marianna Inglese, Andrea Duggento, Matthew Grech-Sollars, Serena Monti, Giancarlo Sportelli, Pietro Carra

Deadline: 31 May 2024

Topic in

Education Sciences, Entropy, JAL, Societies, Sustainability

Sustainability in Aging and Depopulation Societies

Topic Editors: Shiro Horiuchi, Gregor Wolbring, Takeshi Matsuda

Deadline: 15 June 2024

Topic in

Buildings, Energies, Entropy, Resources, Sustainability

Advances in Solar Heating and Cooling

Topic Editors: Salvatore Vasta, Sotirios Karellas, Marina Bonomolo, Alessio Sapienza, Uli Jakob

Deadline: 30 June 2024

Conferences

22–26 November 2024

2024 International Conference on Science and Engineering of Electronics (ICSEE'2024) Wuhan, China

28–31 May 2024

XXII Conference on Non-equilibrium Statistical Mechanics and Nonlinear Physics—MEDYFINOL 2024

Special Issues

Special Issue in

Entropy

Information Theory for Interpretable Machine Learning

Guest Editors: Marco Piangerelli, Sotiris Kotsiantis

Deadline: 15 May 2024

Special Issue in

Entropy

Entropy, Statistical Evidence, and Scientific Inference: Evidence Functions in Theory and Applications

Guest Editors: Brian Dennis, Mark L. Taper, Jose Miguel Ponciano

Deadline: 31 May 2024

Special Issue in

Entropy

Nonlinear Dynamics in Cardiovascular Signals

Guest Editor: Claudia Lerma

Deadline: 15 June 2024

Special Issue in

Entropy

Non-equilibrium Thermodynamics

Guest Editors: Duc Nguyen-Manh, Abraham Marmur

Deadline: 30 June 2024

Topical Collections

Topical Collection in

Entropy

Algorithmic Information Dynamics: A Computational Approach to Causality from Cells to Networks

Collection Editors: Hector Zenil, Felipe Abrahão

Topical Collection in

Entropy

Wavelets, Fractals and Information Theory

Collection Editor: Carlo Cattani

Topical Collection in

Entropy

Entropy in Image Analysis

Collection Editor: Amelia Carolina Sparavigna